I started my internship in the OutSystems team at Mediaweb with a mix of excitement and uncertainty.

During my first days, while going through onboarding, I began exploring what other team members were building, especially the projects involving AI. I’ll be honest: I didn’t fully understand most of it at first. But seeing it in action, in real applications and real use cases, was both overwhelming and incredibly motivating. For someone just starting in the OutSystems world, being exposed to this level of innovation so early on was genuinely rewarding.

As I became more familiar with the team and the work, one topic kept coming up again and again: Agentic AI. It wasn’t just a buzzword. It was something people were actively exploring, building, and discussing internally.

So I asked if I could take it on as a challenge. The proposal was simple: understand Agentic AI… and then build my first agent using ODC. But before building anything, I needed to truly understand what it meant.

As part of that journey, I was also asked to present the topic to the team (so they knew I understood the concepts).

This article is a summary of what I learned along the way.

Starting with the Right Question

One of the first things I realized is that most explanations of AI agents focus on tools, frameworks or specific platforms.

That’s useful, but it doesn’t really help you understand what an agent is.

Technologies evolve quickly. Mental models last longer. So instead of starting with tools, I started with a simpler question: “How does an agent actually behave inside a product and what kind of experience does it create?”

What Is an AI Agent (in Practice)

Artificial intelligence agents are becoming a familiar part of modern digital products. They show up in assistants, workflow automation, decision-support features, and increasingly inside the core logic of enterprise systems.

But the term “agent” is often used loosely.

At a practical level, an AI agent is best understood not as a chatbot or a magical interface, but as a system component that combines:

- context;

- instructions;

- decision-making;

- action.

The Agent as an Operational Loop

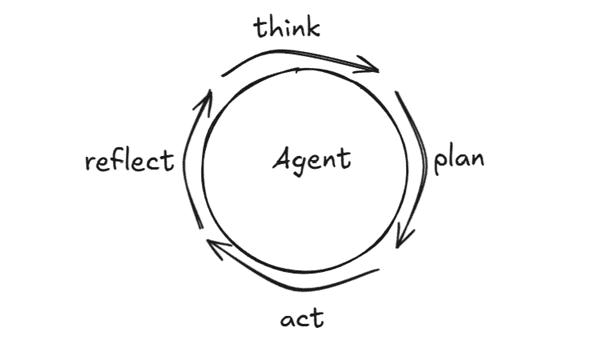

One of the most useful ways I found to understand agents is to think of them as a loop.

An agent receives input, interprets context, decides what to do and produces an action or output.
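As a rough illustration, one pass through that loop can be sketched in a few lines of Python. Everything here is hypothetical: `decide` is a hard-coded stand-in for a model, and names such as `AgentState` and `run_step` are invented for this sketch rather than taken from ODC or any framework.

```python
from dataclasses import dataclass, field


@dataclass
class AgentState:
    """Context the agent carries from one step to the next."""
    history: list[str] = field(default_factory=list)


def interpret(user_input: str, state: AgentState) -> dict:
    """Combine the new input with what the agent already knows."""
    return {"input": user_input.strip().lower(), "turns_so_far": len(state.history)}


def decide(context: dict) -> str:
    """Stand-in for the model: pick an action from the interpreted context."""
    if "refund" in context["input"]:
        return "open_refund_ticket"
    return "answer_directly"


def act(action: str, context: dict) -> str:
    """Turn the chosen action into an output."""
    if action == "open_refund_ticket":
        return "I opened a refund ticket for you."
    return f"You said: {context['input']}"


def run_step(user_input: str, state: AgentState) -> str:
    """One pass through the loop: input -> context -> decision -> action."""
    context = interpret(user_input, state)
    action = decide(context)
    output = act(action, context)
    state.history.append(user_input)  # carry context forward to the next step
    return output


state = AgentState()
print(run_step("I want a refund", state))  # I opened a refund ticket for you.
print(run_step("Thanks!", state))          # You said: thanks!
```

The same `run_step` could be called from a chat screen or from a background event handler; the loop itself does not change.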

Sometimes this loop is conversational. Other times, it runs silently in the background, triggered by events, workflows, or user actions.

This distinction matters.

Traditional interfaces are mostly request-and-response: you click and get a result.

Agentic systems introduce something more dynamic. They carry context forward, adapt behavior and influence a sequence of steps rather than just a single interaction.

From a product perspective, this changes the focus. Instead of asking “What happens on this screen?”, it shifts to “How does the system behave across a flow, and how does that shape the experience?”

An Agent Is More Than a Model

One of the first misconceptions I had was thinking the “agent” was just the AI model. It’s not.

A better way to see it is as a system made of:

- an AI model;

- instructions;

- contextual data;

- tools/actions;

- (sometimes) memory.

The model generates responses, but the structure around it defines behavior. This shifts the focus from “What can the model say?” to “What is the system actually doing, and how does that impact the experience?”
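To make the "system, not model" idea concrete, here is a minimal sketch of how those parts might be grouped. It assumes the model is just a function from text to text; the `Agent` class, its fields, and `echo_model` are illustrative names, not an actual ODC or vendor API. Tools are held by the structure but not called in this small example.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Agent:
    model: Callable[[str], str]          # the AI model, reduced to text in, text out
    instructions: str                    # how the agent should behave
    context: dict[str, str] = field(default_factory=dict)                 # contextual data
    tools: dict[str, Callable[[str], str]] = field(default_factory=dict)  # available actions
    memory: Optional[list[str]] = None   # None means the agent is stateless

    def build_prompt(self, user_input: str) -> str:
        """Assemble everything the model sees for this turn."""
        parts = [f"Instructions: {self.instructions}"]
        for key, value in self.context.items():
            parts.append(f"{key}: {value}")
        if self.memory:
            parts.append("Previous turns: " + " | ".join(self.memory))
        parts.append(f"User: {user_input}")
        return "\n".join(parts)

    def respond(self, user_input: str) -> str:
        reply = self.model(self.build_prompt(user_input))
        if self.memory is not None:
            self.memory.append(user_input)  # only remember when memory is enabled
        return reply


def echo_model(prompt: str) -> str:
    """Hypothetical model stub: reports how many prompt lines it received."""
    return f"model saw {len(prompt.splitlines())} lines"


agent = Agent(model=echo_model, instructions="Be concise.",
              context={"Customer tier": "gold"})
print(agent.respond("Where is my order?"))  # model saw 3 lines
```

Swapping `echo_model` for a real model call would change what the agent says, but the instructions, context, and memory settings would still decide what it is asked to do.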

The Boundary Between the System and the World

Another concept that helped clarify things was separating the agent from its environment.

The agent sits at the center of decision-making. Everything else (users, databases, APIs, services) exists around it.

These external elements don’t make the agent smarter. They expand what it can access and do.

That’s an important distinction. More integrations ≠ more intelligence.

What really matters is orchestration:

- when to use each system;

- why to use it;

- how outputs influence the next step.

For product teams, this is a useful reminder: Integrations are infrastructure. Experience is behavior.

Grounding Is What Makes Agents Trustworthy

This was probably the most important concept for me.

Grounding is what separates a cool demo from a reliable system. It means giving the agent access to real, up-to-date, relevant data from your systems.

Without grounding, responses may sound convincing but can be incorrect or misleading. With grounding, behavior is tied to reality, decisions reflect actual data, and trust increases significantly.

From a UX perspective, this is critical. Users don’t trust systems that sound smart. They trust systems that are consistently right because the experience proves it.

More context isn’t always better, though.

Good grounding is:

- relevant;

- focused;

- current.

Too much noise reduces effectiveness.
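The three properties above can be sketched as a small filter that runs before any data reaches the model. This is only an illustration under simple assumptions: relevance is approximated by keyword overlap, and `Record`, `select_grounding`, and the 30-day cutoff are invented for the example.

```python
import re
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Record:
    text: str
    updated: date


def tokens(text: str) -> set[str]:
    """Lowercase words and numbers, with punctuation stripped."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_grounding(question: str, records: list[Record], today: date,
                     max_age_days: int = 30, limit: int = 2) -> list[str]:
    """Keep only records that are relevant, current, and few in number."""
    keywords = {word for word in tokens(question) if len(word) > 3}
    cutoff = today - timedelta(days=max_age_days)
    scored = []
    for record in records:
        if record.updated < cutoff:      # current: drop stale data
            continue
        overlap = len(keywords & tokens(record.text))
        if overlap > 0:                  # relevant: must share a keyword
            scored.append((overlap, record.text))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:limit]]  # focused: cap the context size


records = [
    Record("Order 1042 shipped on Monday", date(2024, 5, 10)),
    Record("Order 1042 was delayed last year", date(2023, 1, 5)),
    Record("Office closed on public holidays", date(2024, 5, 12)),
]
print(select_grounding("Where is my order 1042?", records, today=date(2024, 5, 15)))
# ['Order 1042 shipped on Monday']
```

The stale record and the unrelated one are both dropped, so the model would only see the single fact that matters for this question.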

Continuity Matters But Memory Isn’t Always Required

One of the biggest improvements agents bring to user experience is continuity. When systems carry forward context, users don't repeat themselves, flows feel coherent and interactions feel natural.

This continuity can come from conversation memory, workflow state or persisted application data. But not every agent needs long-term memory. Some are short-lived, task-specific or intentionally stateless.

The key is not "more memory". It's the right level of continuity for the experience you want to create.
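A small sketch can show the two ends of that spectrum. Both pieces are hypothetical and use hard-coded rules in place of a model: `classify_ticket` is intentionally stateless, while `BookingAssistant` keeps just enough workflow state that the user never has to repeat an answer.

```python
def classify_ticket(text: str) -> str:
    """Stateless: every call stands alone and nothing is remembered."""
    return "billing" if "invoice" in text.lower() else "general"


class BookingAssistant:
    """Keeps workflow state for the duration of one task."""

    REQUIRED = ("city", "date")

    def __init__(self) -> None:
        self.slots: dict[str, str] = {}

    def handle(self, slot: str, value: str) -> str:
        self.slots[slot] = value  # remember what the user already told us
        missing = [name for name in self.REQUIRED if name not in self.slots]
        if missing:
            return f"Got it. What is the {missing[0]}?"
        return f"Booking for {self.slots['city']} on {self.slots['date']}."


print(classify_ticket("My invoice is wrong"))  # billing

assistant = BookingAssistant()
print(assistant.handle("city", "Lisbon"))      # Got it. What is the date?
print(assistant.handle("date", "2024-06-01"))  # Booking for Lisbon on 2024-06-01.
```

Neither design is better in general. The classifier would gain nothing from remembering earlier tickets, and the booking flow would feel broken without its two remembered values.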

Goals and Instructions Shape Behavior

Every agent operates with a goal. It might be a specific task, such as summarizing, recommending, classifying or assisting, or something broader, such as being helpful, concise or policy-aligned.

What surprised me is how much behavior depends on instructions. Clear instructions lead to predictable behavior; vague instructions lead to an inconsistent experience.

Most "unpredictable" agents aren't actually unpredictable. They're just poorly defined.

From Reactive Interfaces to Deliberative Systems

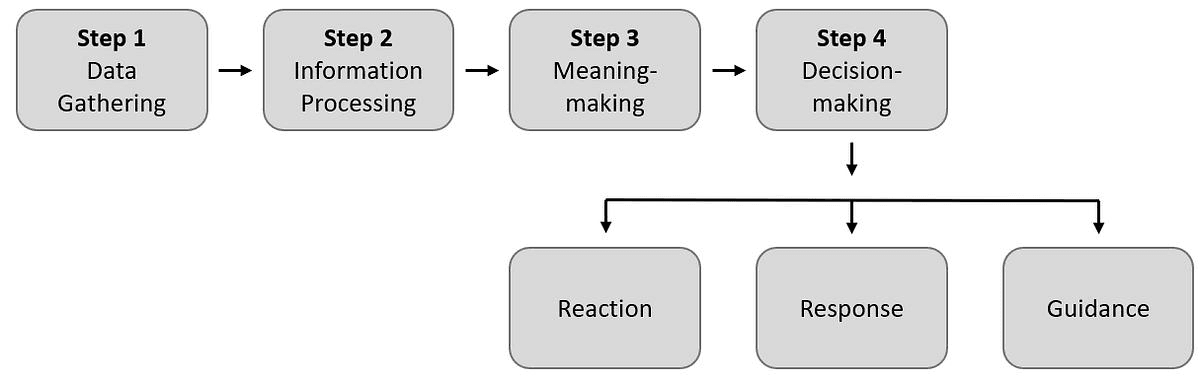

Most systems today are reactive: the user acts and the system responds. Agents introduce deliberation.

Instead of reacting immediately, they can evaluate context, choose between options, decide if more information is needed and break problems into steps.

This introduces new design questions:

- When should the system respond instantly?

- When should it pause?

- When should it ask for clarification?

- How much should it explain?

These are UX decisions, not just technical ones. They shape how the experience feels: responsive, thoughtful, or frustrating.
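One way to picture deliberation is as an explicit decision that happens before any answer is produced. The sketch below is a deliberately simple assumption: a real agent would usually let the model make this call, and the `NextMove` options and the rules inside `deliberate` are made up for illustration.

```python
from enum import Enum


class NextMove(Enum):
    RESPOND = "respond"
    ASK_CLARIFICATION = "ask_clarification"
    PLAN_STEPS = "plan_steps"


def deliberate(request: str, known_fields: set[str],
               required_fields: set[str]) -> NextMove:
    """Decide what to do next instead of answering immediately."""
    missing = required_fields - known_fields
    if missing:
        return NextMove.ASK_CLARIFICATION  # more information is needed first
    if " and " in request.lower():
        return NextMove.PLAN_STEPS         # treat compound requests as several steps
    return NextMove.RESPOND                # simple and complete: answer right away


print(deliberate("Cancel my order", set(), {"order_id"}))
# NextMove.ASK_CLARIFICATION
print(deliberate("Cancel my order and refund me", {"order_id"}, {"order_id"}))
# NextMove.PLAN_STEPS
print(deliberate("Cancel my order", {"order_id"}, {"order_id"}))
# NextMove.RESPOND
```

Each branch corresponds to one of the design questions above, which is why the thresholds behind them are product decisions as much as technical ones.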

Tools Expand Capability (But Don’t Define Intelligence)

Agents often use tools like APIs, search, automation and external services that expand their capability, but these don't define the agent. And more tools don't mean better outcomes.

What matters is using them at the right moment, for the right reason, in the right sequence. The best experiences hide this complexity. Users don't care which system was called. They care that the outcome feels right.
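As a hedged sketch of "right moment, right reason, right sequence", the example below chains two hypothetical tools. The tool names, their return values, and the `call_log` list are all assumptions made for illustration; the point is that the second tool is called only when the first one's output makes it useful.

```python
from typing import Callable

call_log: list[str] = []  # records which tools were actually used


def lookup_order(order_id: str) -> str:
    """Hypothetical tool: return the status of an order."""
    call_log.append("lookup_order")
    return "shipped" if order_id == "1042" else "unknown"


def estimate_delivery(status: str) -> str:
    """Hypothetical tool: estimate delivery from a known status."""
    call_log.append("estimate_delivery")
    return "within 2 days" if status == "shipped" else "once it ships"


TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_order": lookup_order,
    "estimate_delivery": estimate_delivery,
}


def handle_order_question(order_id: str) -> str:
    """Orchestrate the tools: each output decides the next step."""
    status = TOOLS["lookup_order"](order_id)
    if status == "unknown":
        return "I could not find that order."  # no reason to call the second tool
    eta = TOOLS["estimate_delivery"](status)
    return f"Your order is {status} and should arrive {eta}."


print(handle_order_question("1042"))
# Your order is shipped and should arrive within 2 days.
print(handle_order_question("9999"))
# I could not find that order.
```

The user only ever sees the final sentence. Which tools ran, and in what order, stays inside the system.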

Conclusion

At this point, AI agents stop being abstract concepts. They become something more concrete: systems that operate in loops, shaped by goals and instructions, grounded in real data and interacting with a broader environment.

For me, this mental model made everything clearer. It shifted the focus from "What can AI generate?" to "How does this system behave and what kind of experience does it create?"

What is an AI agent and how is it different from a chatbot?

Unlike chatbots, AI agents combine context, instructions, decision-making and action in a continuous loop. They can run in the background, adapt across multiple steps and influence entire workflows, not just single interactions.

What makes an AI agent reliable and trustworthy?

Grounding: connecting the agent to real, current data from your systems. Without it, responses may sound convincing but be incorrect. With it, decisions reflect actual data and user trust builds naturally.

Do more integrations make an AI agent smarter?

No. Integrations expand what an agent can access, but intelligence comes from orchestration: knowing when to use each tool, why to use it, and how each output shapes the next step.

Why do AI agents sometimes behave unpredictably?

Usually it's not a model problem; it's a definition problem. Vague instructions produce inconsistent behavior. Clear, well-structured instructions lead to predictable, reliable agents.

Does an AI agent always need memory?

No. Some agents are task-specific and intentionally stateless. What matters is the right level of continuity for the experience you want to create, not simply more memory.