After putting together the initial mental model, I started looking at agents differently.

Not as abstract systems but as components that needed to work inside real products, with real constraints. That shift changed the focus completely.

Understanding how an agent works is one thing. Designing one that behaves well in a real experience is something else.

As I moved forward with the challenge and started thinking about building my first agent in ODC, the questions changed. The question was no longer just “What is an agent?” but “How do we design something that is not only capable but also usable, reliable, and aligned with real user needs?”

Structure Turns Responses Into Application Logic

One of the first practical lessons was this: free-form text is not enough.

It's great for communication, but real applications need something more structured. Once an agent becomes part of a workflow, its output needs to be parsed, validated, stored and reused.

That's where structured outputs become critical. Instead of just generating text, the agent returns classifications, scores, recommendations or decisions.

This is what transforms an agent from “something that talks” into “something that actively participates in the experience.”
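To make the idea of parsing and validating concrete, here is a minimal sketch in Python. It is not ODC code: the `TicketDecision` shape, the category labels and the `parse_decision` helper are hypothetical names chosen for illustration, and it assumes the agent has been asked to reply with a JSON object.

```python
import json
from dataclasses import dataclass

# Hypothetical labels; a real application would define its own.
ALLOWED_CATEGORIES = {"billing", "technical", "other"}


@dataclass
class TicketDecision:
    category: str
    confidence: float
    recommendation: str


def parse_decision(raw: str) -> TicketDecision:
    """Parse and validate the agent's JSON reply; raise ValueError if unusable."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"agent reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("agent reply must be a JSON object")

    category = data.get("category")
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        raise ValueError(f"unknown category: {category!r}")

    confidence = data.get("confidence")
    is_number = isinstance(confidence, (int, float)) and not isinstance(confidence, bool)
    if not is_number or not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be a number between 0 and 1")

    recommendation = data.get("recommendation")
    if not isinstance(recommendation, str) or not recommendation.strip():
        raise ValueError("recommendation must be a non-empty string")

    return TicketDecision(category, float(confidence), recommendation.strip())
```

Once the reply passes through a function like this, the rest of the application works with a typed value it can store and reuse, and a malformed reply fails loudly at the boundary instead of somewhere downstream.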

Designing for Uncertainty

Traditional systems aim for certainty.

Agents operate in ambiguity: inputs are incomplete, intent is unclear and context is missing. And that's normal.

So instead of trying to eliminate uncertainty, we design around it. That might mean asking clarifying questions, offering multiple options, constraining decisions to grounded data or introducing review steps.

Interestingly, the most trustworthy systems aren't the ones that always sound confident. They're the ones that handle uncertainty well and reflect that honestly in the experience.
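One way to picture designing around uncertainty is a small routing function that decides whether to proceed, offer options, or ask a clarifying question. This is only a sketch under assumptions: the `Candidate` type, the `next_step` function and both thresholds are hypothetical, and it presumes the agent returns a confidence score for each interpretation.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    label: str         # one possible interpretation of the request
    confidence: float  # assumed to be a score between 0 and 1


def next_step(candidates, act_threshold=0.85, offer_threshold=0.5):
    """Choose how the experience should respond to uncertain interpretations."""
    ranked = sorted(candidates, key=lambda c: c.confidence, reverse=True)

    # Nothing plausible enough: ask instead of guessing.
    if not ranked or ranked[0].confidence < offer_threshold:
        return ("ask_clarifying_question", [])

    # One clear winner: proceed with it.
    if ranked[0].confidence >= act_threshold:
        return ("proceed", [ranked[0].label])

    # Several plausible readings: let the user pick.
    options = [c.label for c in ranked if c.confidence >= offer_threshold]
    return ("offer_options", options)
```

The thresholds here are arbitrary; the point is that the uncertain cases are explicit branches in the design rather than something hidden behind a confident-sounding answer.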

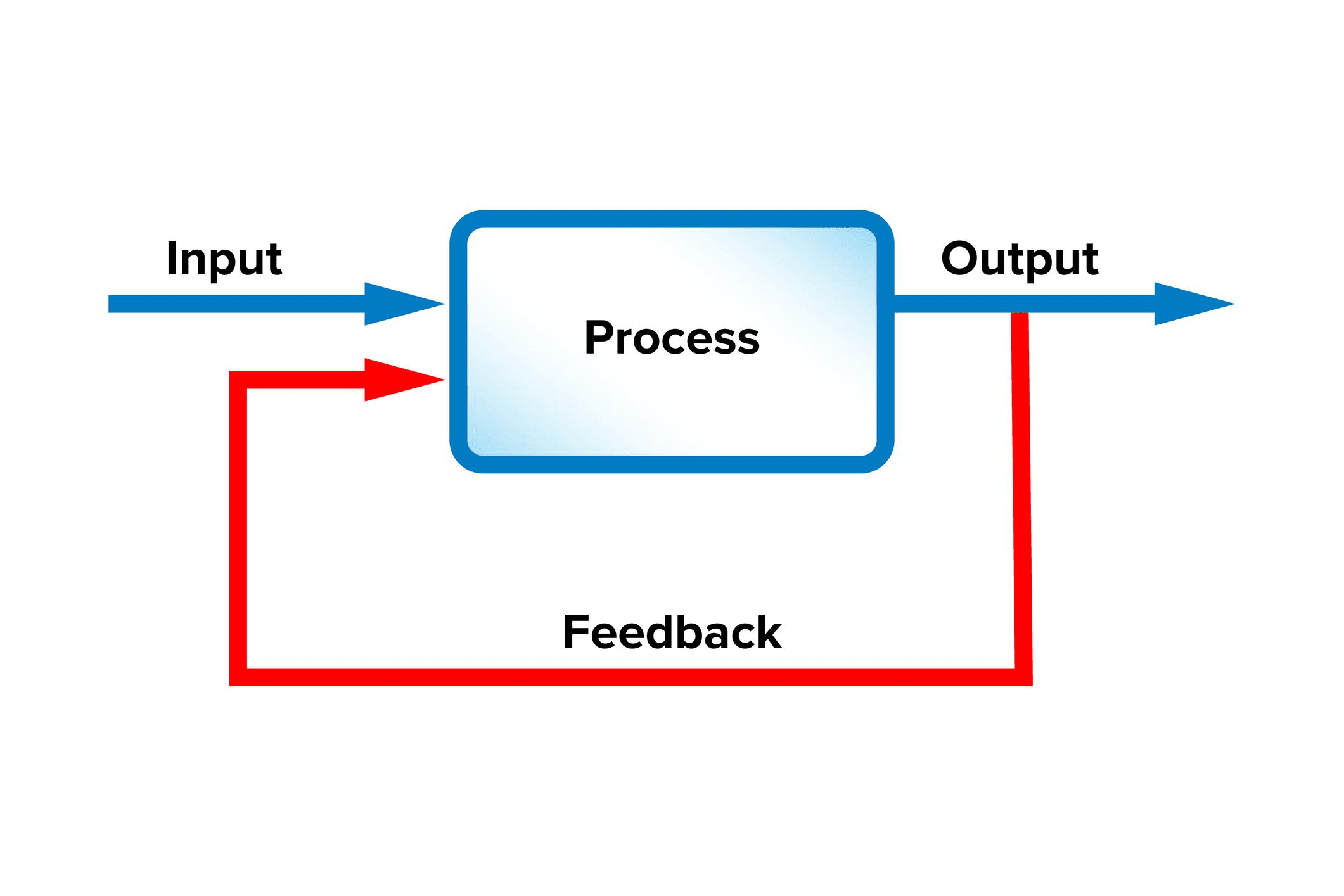

Feedback Shapes the Experience

At first, I thought feedback was mostly about improving the model. But in practice, it’s much more about shaping the experience.

Every time a user corrects something, approves a result, rejects an output, or refines a prompt, they are influencing how the system behaves next. This makes feedback a design problem, not just a technical one.

A good feedback loop feels collaborative, adaptive and responsive, while a poor one feels rigid, repetitive and frustrating.

Breaking Down Complexity

One of the most valuable patterns I noticed is how agents handle complexity.

Instead of solving everything at once, strong systems break work into steps:

- validating input;

- enriching data;

- applying logic;

- generating outputs.

This isn't just good engineering; it's good UX. It allows users to understand what's happening, follow the process and intervene when needed.

Complexity doesn't disappear. It becomes manageable and the experience feels more transparent.
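The four steps listed above can be sketched as a small pipeline that also records which step ran, so the experience can show progress. This is an illustrative sketch, not how ODC implements agents: every function name and the priority rule are hypothetical.

```python
def validate_input(state: dict) -> dict:
    text = str(state.get("text", "")).strip()
    if not text:
        raise ValueError("empty input")
    return {**state, "text": text}


def enrich_data(state: dict) -> dict:
    # Stand-in for a lookup or retrieval step.
    return {**state, "word_count": len(state["text"].split())}


def apply_logic(state: dict) -> dict:
    # Hypothetical business rule, kept outside the model.
    priority = "high" if "urgent" in state["text"].lower() else "normal"
    return {**state, "priority": priority}


def generate_output(state: dict) -> dict:
    summary = f"{state['priority']} priority, {state['word_count']} words"
    return {**state, "summary": summary}


PIPELINE = [validate_input, enrich_data, apply_logic, generate_output]


def run(state: dict):
    """Run each step in order and keep a trace the UI could display."""
    trace = []
    for step in PIPELINE:
        state = step(state)
        trace.append(step.__name__)
    return state, trace
```

Because each step is a separate function, a failure points to a specific stage, and the trace gives users a place to follow along or step in.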

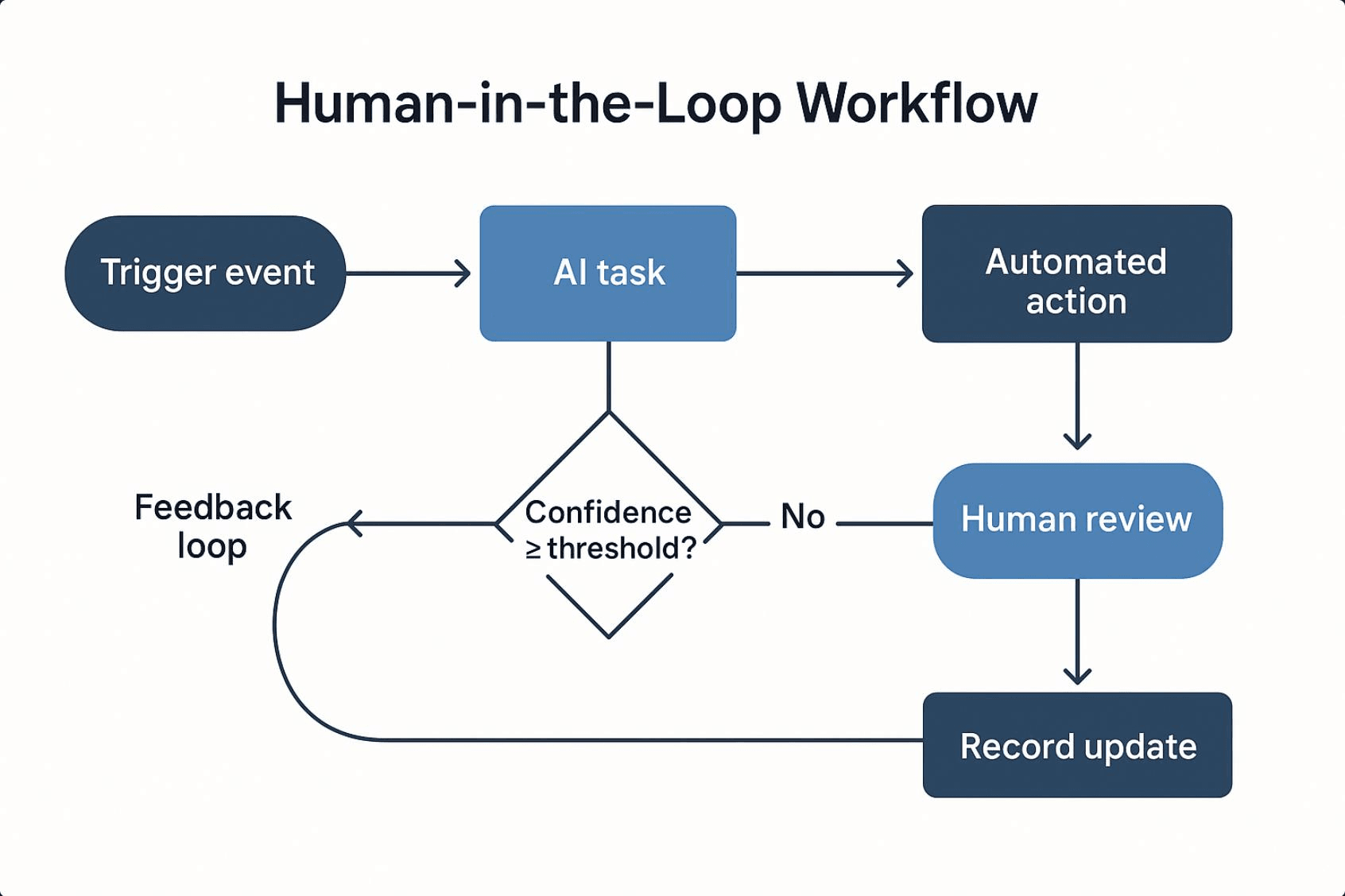

Human Oversight Still Matters

As I explored more use cases, one thing became clear: more autonomy is not always better.

In many scenarios, the best approach is selective autonomy. The agent can analyze, recommend and draft, but a human validates, approves and takes responsibility.

This isn't a limitation; it's good design.

The goal isn't full automation. It's designing the right balance between system capability and human judgment within the experience.
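Selective autonomy can be expressed as a simple approval gate: the agent produces a draft, and nothing executes until a person signs off. The sketch below assumes a hypothetical `Draft` record with `approve` and `execute` helpers; none of these names come from ODC.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    action: str                        # what the agent proposes to do
    rationale: str                     # why it proposes it
    approved_by: Optional[str] = None  # set only by a human reviewer


def approve(draft: Draft, reviewer: str) -> Draft:
    """Return an approved copy of the draft, attributed to the reviewer."""
    if not reviewer.strip():
        raise ValueError("a reviewer name is required")
    return Draft(draft.action, draft.rationale, approved_by=reviewer.strip())


def execute(draft: Draft) -> str:
    """Refuse to act on anything a human has not approved."""
    if draft.approved_by is None:
        raise PermissionError("draft has not been approved by a human reviewer")
    return f"executed '{draft.action}' (approved by {draft.approved_by})"
```

Keeping the reviewer's name on the record is a deliberate choice in this sketch: it makes clear who took responsibility for the action.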

The Real Challenge: Interpretation

This was probably the most subtle insight.

Agents don’t act on user intent. They act on their interpretation of it. And that interpretation depends on:

- context;

- instructions;

- prior state;

- available tools.

Even small misalignments can lead to wrong outcomes.

So the real challenge isn’t just building capability. It’s reducing the gap between what the user means and what the system understands.

When that alignment is strong, the experience feels intuitive. When it’s not, it quickly breaks down.

Conclusion

Going through this process completely changed how I see AI agents. They’re not just smart features or advanced interfaces. They’re systems that need to be carefully designed, just like any other part of a product.

What makes them valuable is not just what they can generate, but how they behave:

- how they handle uncertainty;

- how they structure decisions;

- how they interact with users;

- how they fit into real workflows.

For me, the biggest shift was this: designing with AI is not just about capability. It’s about behavior. It’s about the experience.