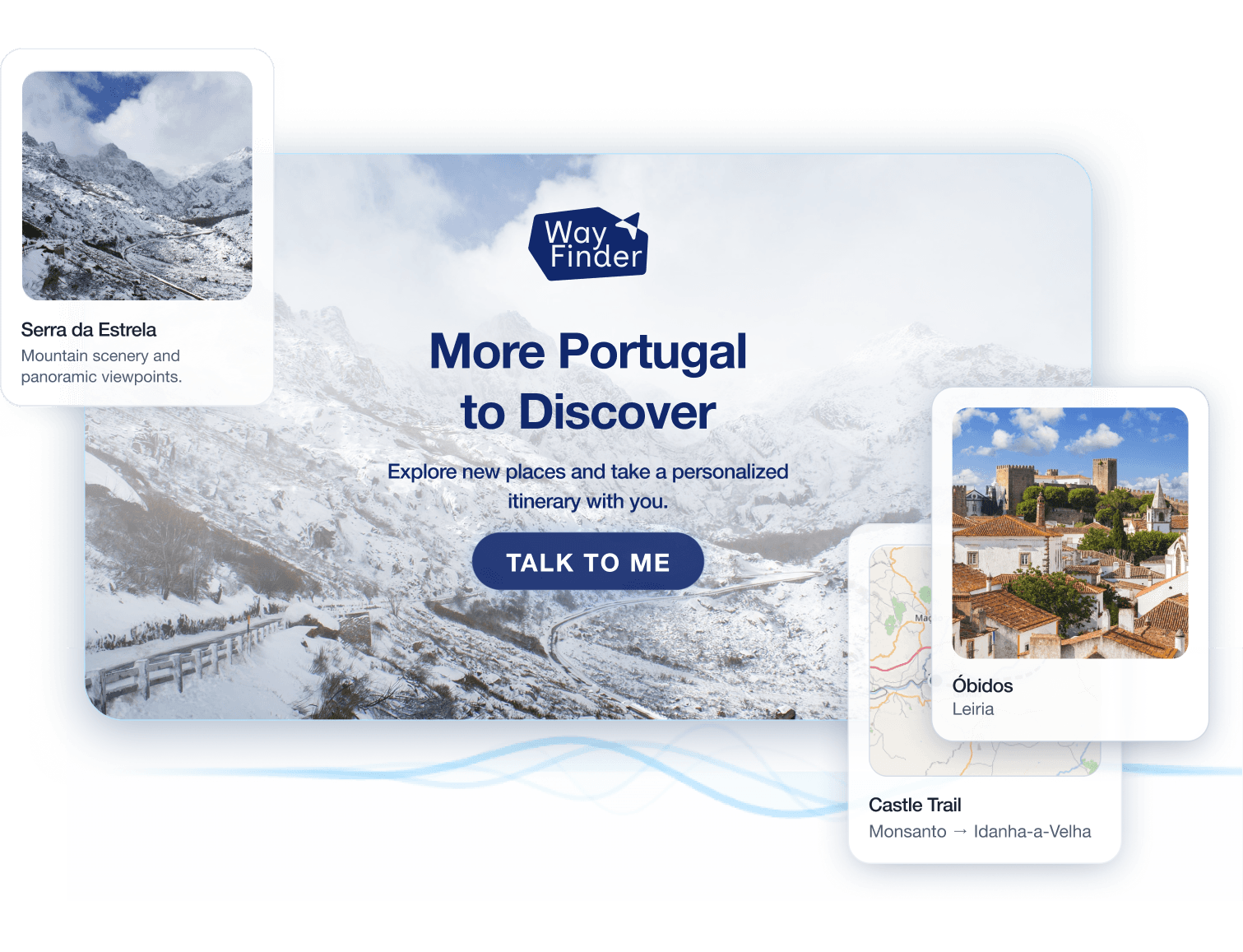

Gen UI Tourism Kiosk

AI responses that feel local and personal, not generic

Crafting a prompt that controls AI behaviour precisely

Extract and structure relevant data

Clean data to ensure model efficiency

Fluent in European Portuguese and other languages

The development of Wayfinder was rooted in the challenge of making AI feel natural in a physical space. That meant structuring a knowledge base the agent could genuinely reason over, designing a conversation flow accessible to any visitor, and building an interface that generates itself in real time. Every decision was guided by one question: does this feel like talking to someone who actually knows the place?

1. Conversational UX Design

We designed the interaction model around a natural conversational flow. The kiosk detects a visitor's presence and opens with short, engaging prompts to quickly understand their interests — culture, nature, gastronomy, or architecture. At the close of each session, the AI generates a tailored itinerary enriched with additional context and insights beyond what was discussed.

2. AI Integration

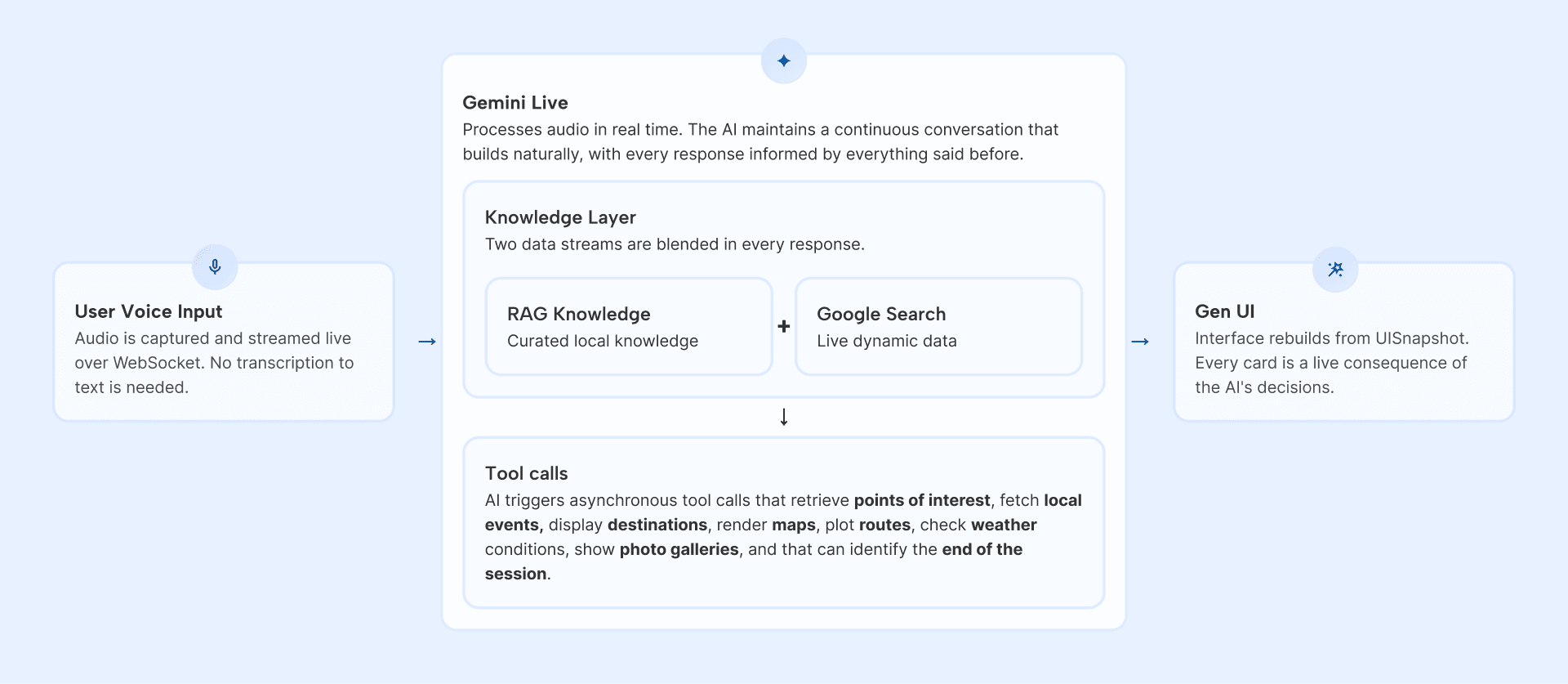

The intelligence layer was built on Google's agent framework, allowing the system to reason simultaneously across points of interest, events, travel logistics, and visitor preferences. The agent is tuned specifically to the region and its destinations, and its knowledge combines a curated RAG base with live Google Search Grounding for dynamic data like events and current conditions.

One of the most compelling parts of the build was the starting point: truly understanding the client's problem. From there, we curated a robust RAG dataset, using multiple LLMs to clean and process the raw data and discarding around 80% of it. That AI-assisted curation was key: a cleaner foundation meant richer output.
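To make the curation step concrete, here is a minimal sketch of a filtering pass, not the pipeline actually used on the project. It assumes each raw entry is a short text record and that relevance is decided by a pluggable `is_relevant` callable, which stands in for the LLM judgement. `Record`, `curate`, and `min_chars` are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Record:
    """One raw entry scraped or exported from a source (hypothetical shape)."""
    source: str
    text: str


def normalize(text: str) -> str:
    """Collapse runs of whitespace so near-identical entries compare equal."""
    return " ".join(text.split())


def curate(
    records: Iterable[Record],
    is_relevant: Callable[[str], bool],
    min_chars: int = 40,
) -> list[Record]:
    """Drop short fragments, duplicates, and entries the judge rejects.

    `is_relevant` is a stand-in for an LLM call. It runs last, so the
    cheaper length and duplicate checks filter first.
    """
    seen: set[str] = set()
    kept: list[Record] = []
    for record in records:
        text = normalize(record.text)
        key = text.casefold()
        if len(text) < min_chars or key in seen:
            continue
        seen.add(key)
        if is_relevant(text):
            kept.append(Record(record.source, text))
    return kept
```

Running the cheap checks before the judge keeps the number of model calls down, which matters when most of the raw data is expected to be discarded.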

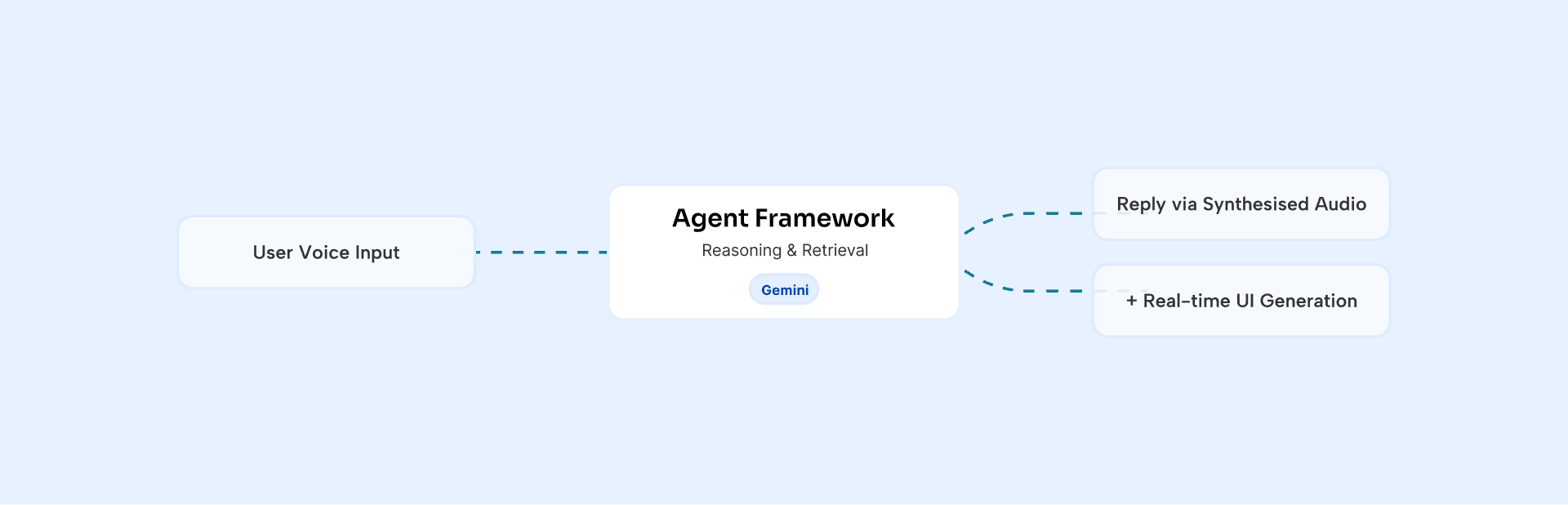

As depicted below, user voice input is captured and streamed live over WebSocket, with no separate transcription step. Gemini Live processes this audio in real time and, based on the conversation, triggers the tool calls that rebuild the interface dynamically, so every change on screen is a direct consequence of the model's decisions.

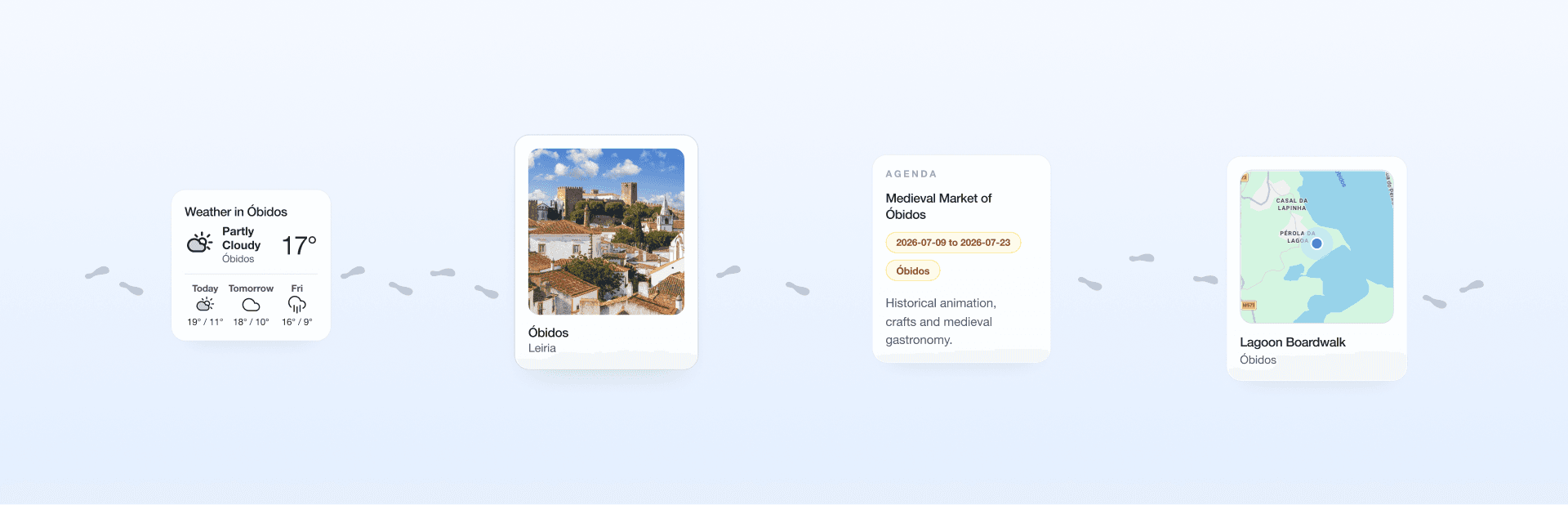
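The streaming side of this flow can be illustrated with a small sketch of how captured audio might be cut into frames before being sent over the socket. It is an illustration under assumptions, not the kiosk's code: it assumes raw 16-bit mono PCM at 16 kHz and a 100 ms frame size, and `pcm_chunks` is a hypothetical helper. The WebSocket send itself is left out because it depends on the client library in use.

```python
from typing import Iterator


def pcm_chunks(
    pcm: bytes,
    sample_rate: int = 16_000,   # assumed capture rate in Hz
    bytes_per_sample: int = 2,   # assumed 16-bit mono samples
    chunk_ms: int = 100,         # assumed frame duration
) -> Iterator[bytes]:
    """Yield fixed-duration slices of a PCM buffer; the last may be shorter."""
    chunk_bytes = sample_rate * bytes_per_sample * chunk_ms // 1000
    for start in range(0, len(pcm), chunk_bytes):
        yield pcm[start:start + chunk_bytes]
```

Small frames keep latency low because the model can start processing speech before the visitor has finished a sentence.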

3. Generative UI

The intelligence layer runs on Gemini Live (Vertex AI), a real-time multimodal model that processes and responds in voice directly. Rather than following a scripted flow, the AI drives the entire experience through tool calls — deciding when to show a map, pull up a gallery, or present a destination card. The interface rebuilds entirely from each decision, meaning no screen was ever designed in advance; what the visitor sees is always a live consequence of the conversation.

This wasn't part of the original brief. The generative interface, where each AI response adds a new card to a growing visual timeline, was conceived and built as an extension of the project's core idea, because it felt like the only honest way to represent what the system was actually doing.
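The idea of tool calls driving a growing timeline can be sketched as a small dispatcher. This is a simplified illustration under assumptions, not the project's implementation: the tool names (`show_map`, `show_gallery`, `show_destination`), the `{"name": ..., "args": ...}` message shape, and the `Card` and `Timeline` types are all hypothetical and do not reflect the actual Gemini Live message format.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass(frozen=True)
class Card:
    """One visual element in the timeline (hypothetical shape)."""
    kind: str
    props: dict[str, Any]


def build_map(args: dict[str, Any]) -> Card:
    return Card("map", {"place": args["place"], "zoom": args.get("zoom", 14)})


def build_gallery(args: dict[str, Any]) -> Card:
    return Card("gallery", {"place": args["place"], "images": list(args.get("images", []))})


def build_destination(args: dict[str, Any]) -> Card:
    return Card("destination", {"place": args["place"], "summary": args.get("summary", "")})


# Hypothetical tool names mapped to the builders that render them.
CARD_BUILDERS: dict[str, Callable[[dict[str, Any]], Card]] = {
    "show_map": build_map,
    "show_gallery": build_gallery,
    "show_destination": build_destination,
}


@dataclass
class Timeline:
    """Append-only list of cards; the UI is derived from it on every change."""
    cards: list[Card] = field(default_factory=list)

    def apply(self, tool_call: dict[str, Any]) -> Card:
        """Turn one tool call from the model into a card and append it."""
        name = tool_call["name"]
        builder = CARD_BUILDERS.get(name)
        if builder is None:
            raise ValueError(f"unknown tool: {name}")
        card = builder(tool_call.get("args", {}))
        self.cards.append(card)
        return card
```

Because the timeline is the only state, the screen can always be re-rendered from `timeline.cards`, and unknown tool names fail loudly instead of producing an empty card.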